ARM Holdings

An Important Strategic Pivot

Yesterday, ARM Holdings held an event called “ARM Everywhere” where they announced a new chip which is optimised for AI Agents and driven by power efficiency as well as performance. In this note, we consider the significance of this announcement.

Introduction

On July 15, 2025, we wrote a note on ARM Holdings which can be found here.

We outlined the history of the Cambridge, England-based company and, in particular, the importance of the ARM RISC ISA chip standard which has become the main rival to the Intel x86 standard.

Mobile Chip Dominance

ARM’s RISC standard found a growing market in mobile phones, where low power consumption and the design flexibility inherent in RISC were big advantages.

The mobile phone market grew rapidly (especially after the launch of the iPhone in 2007) and x86 was nowhere to be seen. Almost all mobile chips were based on ARM RISC designs. ARM is estimated to have a 99% share of smartphone CPUs. This is important because smartphones have to be very power efficient relative to other computers such as desktop PCs.

A total of 350bn ARM-based chips have been shipped to date.

Chip design division of labour

The analyst Jay Goldberg at D2D Advisory used an illuminating analogy to explain ARM’s offering. Goldberg asks us to think of an architect designing a building. The architect’s value-add comes from the overall concept, design and vision. He or she does not get involved in the detail of laying out the plumbing, the electrics and so on. For the latter, the architect simply instructs the builders to use appropriate, well-accepted standard processes.

Chip designers such as Qualcomm, Nvidia, Samsung and others are like the architect in Goldberg’s analogy. Their value-add comes from the innovative, advanced features in their chips. They do not want to waste time on the basic circuit “plumbing” and accepted protocols. They just buy the latter from ARM. Supplying these blueprints is ARM’s main business.

Chip design value chain economics

When a new advanced chip is designed, there may be a lot of initial work between ARM and the chip designer. ARM receives a one-off lump sum payment for this. This is known as License Revenue.

Once the chip design is completed, it will be produced by the likes of TSMC. ARM will receive a few dollars for each chip manufactured. The amount is such a tiny fraction of the value of the chip that the designer will consider it to be immaterial. This is known as Royalty Revenue.

The latter can be surprisingly small. If a $1,000 smartphone has $50 of ARM content and ARM charges a 5% royalty rate to that customer, ARM gets 5% of the ARM content, or $2.50.
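The royalty arithmetic above can be written out as a short sketch. The function and variable names are illustrative only, and the inputs are the hypothetical figures from the example rather than actual ARM contract terms.

```python
def royalty_per_device(arm_content_usd: float, royalty_rate: float) -> float:
    """Royalty earned on one device.

    The rate applies to the value of the ARM content,
    not to the retail price of the device.
    """
    return arm_content_usd * royalty_rate


device_price = 1_000.00  # smartphone price from the example above
arm_content = 50.00      # value of ARM content in the device
rate = 0.05              # 5% royalty rate (illustrative)

royalty = royalty_per_device(arm_content, rate)
share_of_device = royalty / device_price

print(f"Royalty per device: ${royalty:.2f}")
print(f"Share of device price: {share_of_device:.2%}")
```

On these inputs the royalty comes to $2.50, or 0.25% of the price of the phone, which illustrates how little of the end-product value reaches ARM under the licensing model.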

ARM’s business model creates a predictable royalty revenue stream while staying capital-light, focusing on R&D and engineering.

However, the numbers indicate that, as a supplier of IP, ARM is capturing only a small part of the value in the chip design value chain. A move into designing chips can be seen as an attempt to capture greater value.

ARM has been supplying IP for chips for three decades. It has provided this IP in standalone form: CPU IP, GPU IP and System IP.

CSS Subsystems

In recent years, customers have needed to bring chips to market faster. In response, ARM developed Compute Subsystems (CSS).

CSS takes blocks of IP and assembles them into a finished subsystem, helping customers cut up to 18 months from the time required to get a chip into production.

As part of CSS, ARM Neoverse is a line of CPU architectures designed for datacentres, edge computing, and AI workloads. Neoverse CPUs are built for tasks like cloud computing, 5G networks, and machine learning, offering improved performance and power efficiency. Neoverse was introduced in 2019 and 1.25bn Neoverse cores have been shipped and installed.

AWS, Microsoft Azure, and Google Cloud have each developed their own custom Arm processors, drawn by ARM’s lower power consumption and high core densities.

Yesterday ARM announced another potentially significant change to this business model. It will no longer be just a supplier of blueprints or IP; it will also design its own chips.

In particular, it announced the ARM AGI CPU, marking a departure from its traditional IP licensing model.

AGI is Artificial General Intelligence

CPU is of course Central Processing Unit

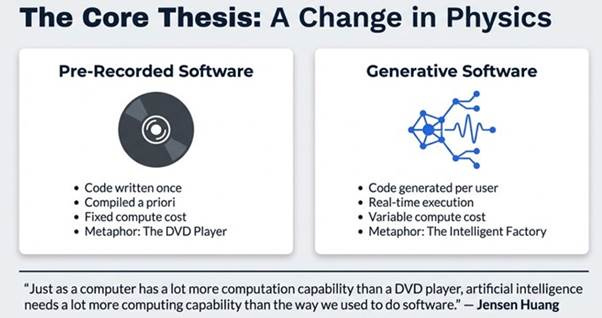

AI is greatly increasing computing demands. In our note on Nvidia on 12th March 2026, we summarised the comments of the Nvidia CEO, who noted that we are moving from a world of pre-recorded software to a world of generative software. That note can be found here.

Source: Kristal.ai

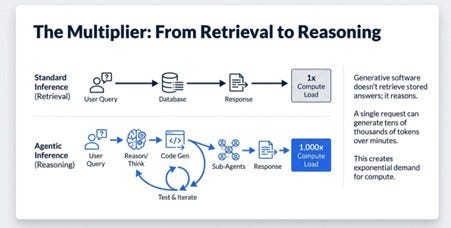

According to Nvidia, generative software requires up to 1,000x more compute than traditional pre-recorded software.

Source: Kristal.ai

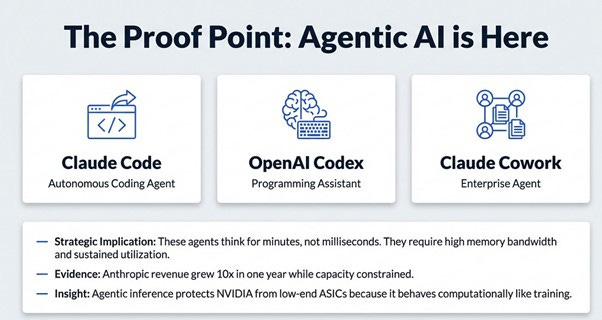

This change has occurred because Anthropic and OpenAI have just released tools which will allow millions of workers to do their work much more efficiently. The new era is known as the age of “Agentic AI”.

Agentic AI increases CPU core requirements by 4x (from 30 million to 120 million CPU cores per gigawatt) within existing power envelopes.

Source: Kristal.ai

AI has huge power needs. A single AI datacentre can need up to 1 gigawatt, which is equivalent to the power needs of a medium-sized US city.

According to RAND Corporation research, AI data centres could require 68 gigawatts of power capacity globally by 2027, close to the capacity of California’s entire power grid. California’s economy, if it were a separate country, would be larger than Japan’s. This is not sustainable. The need of the hour is for much more power-efficient computer architectures.

ARM’s strategic pivot is a direct response to the escalating demands of Agentic AI.

The new ARM CPU will be much more power efficient than x86 equivalents.

The new AGI CPU, purpose-built on a 3-nanometer (nm) TSMC process with 136 Neoverse V3 cores and a 300-watt TDP, leverages Arm’s inherent power efficiency to deliver 2x performance per watt compared to x86 equivalents, addressing the critical need for sustainable, high-density compute.
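The power figures quoted so far can be put on a common per-core basis. The sketch below is back-of-envelope arithmetic using only numbers from this note; the helper name is illustrative. One caveat is an assumption on our part: the note does not say what the per-gigawatt figures include beyond the CPU cores themselves, and TDP is a chip-level rating, so the datacentre and chip results are not directly comparable.

```python
WATTS_PER_GIGAWATT = 1_000_000_000


def watts_per_core(cores: float, watts: float) -> float:
    """Average power budget available per CPU core."""
    return watts / cores


# Datacentre level: CPU cores per gigawatt before and after agentic AI
before = watts_per_core(30_000_000, WATTS_PER_GIGAWATT)
after = watts_per_core(120_000_000, WATTS_PER_GIGAWATT)
core_multiple = 120_000_000 / 30_000_000

# Chip level: 136 Neoverse V3 cores within a 300-watt TDP
chip = watts_per_core(136, 300)

print(f"Core multiple: {core_multiple:.0f}x")
print(f"Budget per core before: {before:.1f} W")
print(f"Budget per core after: {after:.1f} W")
print(f"AGI CPU TDP per core: {chip:.2f} W")
```

Fitting four times as many cores into the same gigawatt means the average budget per core falls to a quarter of its previous level, which is why power efficiency per core becomes the binding constraint.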

ARM announced key partnerships with industry leaders like Meta and OpenAI and these underscore the strategic importance of the AGI CPU.

Meta, a primary co-collaborator, emphasized the need for a power-conscious and efficient partner to match its exponential compute demand, projecting clusters up to 5 gigawatts.

Meta needs to provide personal computing daily for each of its 3.5bn users, so it needs power capacity equivalent to that of five medium-sized US cities. Meta started talking to ARM 2.5 years ago and has decided that ARM is a natural partner. It called this a multi-generational partnership. This is a major vote of confidence.

Paul Saab of Meta said of the performance of the new ARM chips: “we’re seeing performance that is equal to anything you can buy on the market today at massive performance per watt improvements.”

OpenAI is also a primary collaborator. They highlighted the CPU’s essential role as an orchestrator for agentic workflows and the “infinite demand for intelligence,” noting that “the model that you use today is the worst AI model that you will ever use for the rest of your life,” underlining relentless progress and compute needs.

The ARM software ecosystem, bolstered by over 15 years of investment and contributions from hyperscalers, ensures that AI software not only runs well but often “runs best on Arm,” facilitating broad adoption across diverse customers.

ARM is committed to a multi-generational product roadmap for its new silicon, with ARM AGI CPU 2 and ARM AGI CPU 3 already planned, alongside continued investment in its Compute Subsystems (CSS) to accelerate customer time-to-market.

The company projects its Cloud AI business to become its largest segment within a few years, driven by the significant market opportunity presented by agentic AI.

ARM is now addressing a much larger market. The Total Addressable Market (TAM) for Cloud AI expands from the current $3 billion in royalties to approximately $100 billion in the future, with an overarching goal to address a total TAM exceeding $1 trillion by the end of the decade across the entire compute spectrum from edge to cloud.

Conclusions

ARM CEO Rene Haas, in his keynote address at the ARM Everywhere event in San Francisco on March 24, said:

“We are now in a new business for ARM, and we are supplying CPUs as chips,” he said. “The biggest reason we’re doing this is that our partners have asked for it.”

Agentic AI is leading to a need for many more CPUs and those CPUs have to be much more power efficient.

ARM has announced a huge change in its decades-old business model. It will face established competition: Nvidia has recently announced its Vera Rubin CPUs, and Intel and AMD are the dominant players in x86 CPUs.

By this move, ARM is looking to capture more of the value of the chip design process.

It is likely their future revenues will be much higher than current revenues.

The profitability of the company is also likely to improve significantly.

We need to look at the numbers in detail, but ARM could be a very interesting play on the AI revolution.