The AI Infrastructure Stack

15/05/2026


I read the “AI Infrastructure Stack” by Value and Momentum Portfolio. You can find it here.

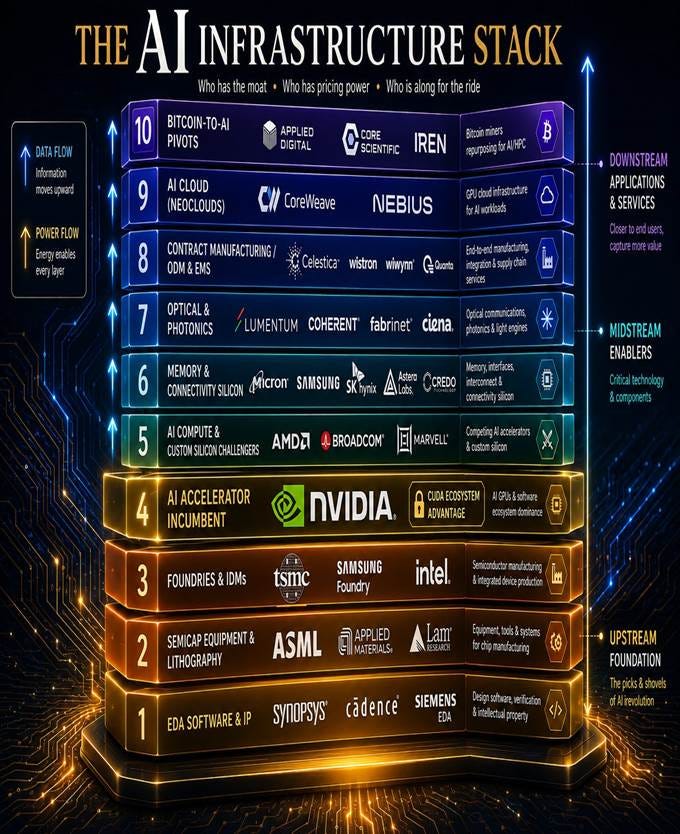

AI has been the main story driving markets for the last two years. The article illustrated the AI Infrastructure Stack as follows:

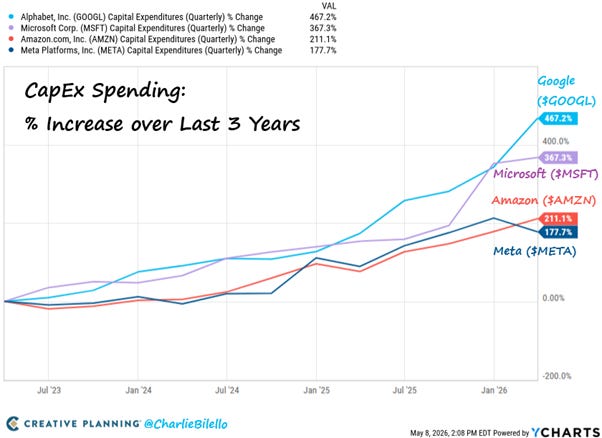

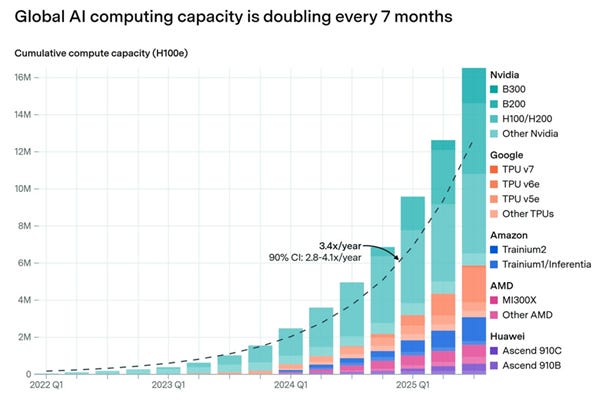

The AI buildout is the largest capital cycle of our generation. Hundreds of billions of dollars a year now flow into the chips, the fabs, the tools that make the chips, the software that designs them, the optical fibers that connect them, and the warehouses that house them. The companies receiving that spend look nothing like each other.

The AI Capex Buildout

The AI Infrastructure Stack shows several companies benefitting from the AI spending boom. We have covered and invested in some of them. Others we either looked at but did not invest in, or have not looked at at all.

Nvidia: The centre of the AI stack.

If you imagine the AI economy as a layered stack, these companies populate almost every level except the foundation models themselves. From the bottom up: EDA software designs the chips; equipment makers build the tools that fabricate them; foundries actually manufacture the silicon; chip designers create the products that get fabricated, with NVIDIA dominating that layer; memory, connectivity, and optical specialists complete the package; contract manufacturers assemble the boxes; and at the top, cloud operators rent out compute by the hour.

Nvidia is the most important AI company, and it sits in the middle of the stack. We have covered it many times. We invested in 2023 after this initial report, and it has been the biggest winner in our portfolio in recent times. It is our largest position.

Then there is NVIDIA, sitting at the center of the ecosystem and referenced by almost every other company on this list: sometimes as a customer, sometimes as a competitor, sometimes as both.

Of the twenty other companies in this analysis, almost every single one references NVIDIA in some way. Synopsys’s largest sophisticated investor of 2026 is NVIDIA, which took a 4.8 million share stake. TSMC fabricates every cutting-edge NVIDIA AI chip; Intel just received a $5 billion investment from NVIDIA and is competing with TSMC for NVIDIA’s foundry business. Lumentum was hand-picked for a $2 billion direct investment as part of NVIDIA’s $4 billion “optics blitz.” CoreWeave is a deep strategic NVIDIA partner, with NVIDIA holding an equity stake; Nebius is a launch partner for new NVIDIA platforms. The companies pivoting from Bitcoin mining (IREN, Applied Digital, Core Scientific) are racing to deploy NVIDIA GPUs by the thousand. AMD, Broadcom and Marvell define their entire competitive position relative to NVIDIA. Micron is the sole U.S. HBM supplier feeding NVIDIA’s AI platforms.

NVIDIA is not just one of the companies on this list, it is the gravitational center the others orbit.

The CUDA software stack, two decades in the making, is the deepest software moat in semiconductors and arguably in all of computing. Every AI researcher and engineer trained on it; every framework optimizes for it. That is why, even though Google’s TPUs, co-designed with Broadcom, are reportedly 40% cheaper to run, hyperscalers still buy NVIDIA in volume.

Summary of the AI stack

Layer 1 of the AI Stack is Electronic Design Automation (EDA)

The two main EDA companies are Synopsys (SNPS) and Cadence Design Systems (CDNS).

We have covered both companies and our most recent reports on both can be found here and here. We invested a small amount in CDNS and it is up 100% in GBP terms.

As is well known, Nvidia has a nearly 90% market share in GPUs and is seeing huge excess demand. Nvidia does not manufacture its own chips. The dominant manufacturer of the most complex and advanced chips is Taiwan Semiconductor Manufacturing Company (TSMC).

Our most recent report on TSMC can be found here. We invested after our first report, which can be found here, and the position is up 132% in GBP terms. It is our fourth-largest position.

Once it was clear that Nvidia was going to be a great success, it was likely that TSMC would be a significant beneficiary.

Layer 2 of the AI stack illustrated above is Semiconductor Equipment and Lithography.

The dominant company here is ASML, with a market share of more than 90% in Extreme Ultraviolet (EUV) lithography. EUV machines are large systems essential for making the most advanced silicon chips. Some of them cost around $400mn, weigh as much as 33 elephants and take up to 250 engineers and 3 months to install.

Since we were convinced about TSMC, we should have concluded that ASML was likely to be a success too. We knew the company quite well but did not invest. It is up 70% in US dollar terms over the last two years.

In addition, there are three dominant suppliers of chip equipment: KLA (formerly KLA-Tencor), Applied Materials (AMAT) and Lam Research (LRCX). As we were positive on Nvidia and TSMC, these were likely to be good companies to invest in, and we knew them quite well. They are all up at least 150%. It was an expensive miss.

Another miss was Intel (INTC). We did not understand how the AI boom was going to boost CPU demand. We looked at INTC and wrote about it here when it was at $20, but we did not invest. It is now at $115 a share, nearly six times that price: a gain of about 475% missed!

We have also looked at Advanced Micro Devices (AMD), which is both a CPU and a GPU play. The most recent report on AMD is here. We made a small, relatively late investment, but even that is up 100% in GBP terms. Over the last two years, AMD is up 175%.

AMD also features in Layer 5 of the AI stack, along with Broadcom (AVGO) and Marvell (MRVL).

Broadcom’s custom AI chips for Google are reportedly 40% cheaper to run, and AMD’s MI308 is ramping.

Amazon, Google and Microsoft are all developing in-house AI silicon. Marvell’s custom ASIC business with Amazon and Microsoft, ramping toward $2B by 2028, exists because hyperscalers want alternatives to NVIDIA.

We have written about AVGO, and the most recent report can be found here. We invested in AVGO after one of these reports, and that position is up 38% in GBP terms. Over the last two years, AVGO and MRVL are up 300% and 250% respectively in US dollar terms.

Layer 6 of the AI stack is Memory & Connectivity Silicon

The memory chip market is dominated by SK Hynix, Samsung Electronics and Micron (MU). We are old enough to remember the 1980s, when Japanese companies competed to produce memory chips and dumped them below cost. This prompted Intel to withdraw from memory chips. The market was flooded; the Japanese companies suffered a lot of red ink and eventually shuttered their chip divisions.

Today, the market structure is an oligopoly. Samsung is a large multi-product conglomerate that makes many types of consumer devices, including leading mobile phones and tablet computers, while SK Hynix and Micron (MU) are pure chip plays.

Micron: The only American memory maker

Micron is the most direct beneficiary of the AI memory supercycle of any company on this list other than NVIDIA itself. AI servers consume 8–12x more DRAM than traditional servers, and high-bandwidth memory (HBM) is the most constrained component in the entire stack after GPUs themselves. Micron is one of only three global HBM suppliers, the sole U.S.-based major memory manufacturer, and the sole U.S. HBM supplier feeding NVIDIA’s AI platforms. That status gives it a unique geopolitical moat as Washington and Beijing decouple their semiconductor supply chains.

We thought memory was still a very cyclical industry and did not understand how much demand for memory, especially HBM, the AI investment boom would generate. Over the last year, Micron’s stock is up 542%. It was another big miss.

Layers 7 to 10 of the AI Infrastructure Stack contain several interesting companies. We have not looked at them yet. We need to do so in the future, although many of them have already risen strongly.